We live in a time when the phrase “artificial intelligence” (AI for short) is trendy and appears in the marketing descriptions of many products and services. But what precisely is AI?

Broadly speaking, AI originated as an idea to create artificial “thinking” along the lines of the human brain.

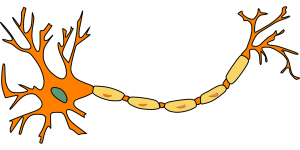

As of today, however, we can only make assumptions about how the human brain works, primarily based on medical research and observation. From a medical point of view, we know that the brain is a complex network of connections in which neurons are the main element, and that our thoughts, memory, and creativity are a flow of electrical impulses. This knowledge has raised hopes of building an analogous electronic brain, in hardware or in software, in which neurons are replaced by circuits or code. However, since we are not 100% sure exactly how the brain works, all current AI models are mathematical approximations and simplifications that serve only specific uses. Nevertheless, we know from observation that it is possible to create solutions that mimic the mind quite well: they can recognize handwriting, images (objects), music, and emotions, and even create art based on previously acquired experience. The results of the latter, however, are sometimes controversial.

This burgeoning field has given rise to extensive philosophical discussions about AI and its implications for society, ethics, and the nature of intelligence itself. Philosophers and technologists alike grapple with questions about the consciousness of AI systems, the ethical ramifications of creating entities capable of making decisions, and the potential for AI to surpass human intelligence. These discussions are not merely academic; they touch on the very essence of what it means to be human, challenging our understanding of creativity, morality, and the value of human experience in an increasingly automated world.

In what other ways does AI resemble the human brain?

Well… it has to learn! AI solutions differ from classical algorithms in one fundamental way: the initial product is a philosophical “tabula rasa”, or blank slate, which must first be taught.

In complex living organisms, knowledge emerges with development: the ability to speak, to move independently, and to name objects. Humans and some animal species also learn in an organized way: in kindergartens, schools, and universities, at work, and through independent study. Most artificial intelligence solutions are analogous: the AI model must first receive specific knowledge, most often in the form of examples, before it can function effectively as an “adult” algorithm. Some solutions learn once, while others keep improving their knowledge while they operate (Online Learning or Reinforcement Learning). This closely resembles human society: some people finish their education and then spend the rest of their lives in one company doing one task, while others have to train throughout their lives because their work environment changes dynamically.
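To make the “blank slate” idea concrete, here is a minimal sketch of a perceptron, one of the simplest learning models. It is an illustration only, not a method described in this post: the class name `Perceptron`, the toy task (the logical AND function), and all parameter values are assumptions chosen for the example. The model starts with all-zero weights, knows nothing, and only gives correct answers after it has seen examples. The `learn_one` method is the kind of single-example update an online learner could keep applying after deployment, while `train` corresponds to learning once from a fixed set of examples.

```python
class Perceptron:
    """Toy model that starts as a blank slate: all weights are zero."""

    def __init__(self, n_inputs, learning_rate=1):
        self.weights = [0] * n_inputs
        self.bias = 0
        self.learning_rate = learning_rate

    def predict(self, inputs):
        total = self.bias + sum(w * x for w, x in zip(self.weights, inputs))
        return 1 if total > 0 else 0

    def learn_one(self, inputs, target):
        """Online-style update: adjust the weights after a single example."""
        error = target - self.predict(inputs)
        self.weights = [
            w + self.learning_rate * error * x
            for w, x in zip(self.weights, inputs)
        ]
        self.bias += self.learning_rate * error

    def train(self, examples, epochs=20):
        """'Learn once' mode: repeat over a fixed example set before use."""
        for _ in range(epochs):
            for inputs, target in examples:
                self.learn_one(inputs, target)


# Hypothetical training data: the logical AND of two inputs.
AND_EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

model = Perceptron(n_inputs=2)
untrained = [model.predict(x) for x, _ in AND_EXAMPLES]
model.train(AND_EXAMPLES)
trained = [model.predict(x) for x, _ in AND_EXAMPLES]

print("before training:", untrained)  # [0, 0, 0, 0]
print("after training: ", trained)    # [0, 0, 0, 1]
```

Before training, the model answers 0 to everything; afterwards it reproduces the examples it was shown. Real AI models follow the same pattern with vastly more weights and data.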

The business landscape is undergoing a major transformation thanks to artificial intelligence, which is being used to automate tasks and identify new growth opportunities. Recently, a group of 6 entrepreneurs described how AI is revolutionizing their business operations: one uses AI-powered chatbots to improve customer service, and another uses machine learning algorithms to optimize supply chain management. These examples show the versatility of AI and its potential to enhance a wide range of business functions. Their insights offer a glimpse into a field where AI is not just streamlining existing processes but also creating new opportunities for innovation and growth. As AI becomes more capable and more accessible, it will be fascinating to see how businesses of all sizes and industries integrate it into their operations.

Is AI already “smarter” than humans?

As an interesting aside, we can compare the “computing power” of the brain with the computing power of computers. This will, of course, be a simplification, because the nature of the two is quite different.

First, how many neurons does the average human brain have? It was initially estimated at around 100 billion. However, according to more recent research (https://www.verywellmind.com/how-many-neurons-are-in-the-brain-2794889), the “average” human brain has about 14 billion fewer, or roughly 86 billion neurons. For comparison, the brain of a fruit fly has about 100 thousand neurons, a mouse 75 million, a cat 250 million, and a chimpanzee 7 billion. An interesting case is the elephant’s brain (much larger than a human’s in size), which has … 257 billion neurons, definitely more than the human brain.

From medical research, we know that each neuron has about 1000 connections, the so-called synapses, to neighboring neurons, so in humans the total number of connections is around 86 trillion (86 billion neurons * 1000 connections). In simplified terms, we can then assume that each synapse performs one “operation” per cycle, analogous to one instruction in a processor.

At what speed does the brain work? All in all … not very fast. We can estimate it using BCIs (Brain-Computer Interfaces), which appeared not so long ago as a result of the development of medical devices for electroencephalography (EEG). Examples include the headsets produced by Emotiv, which let us control a computer using brain waves. Of course, they do not connect directly to the cerebral cortex; they measure its activity by analyzing electrical signals. Based on this, we can say that the brain works at a variable speed (analogous to Turbo mode in a processor), ranging from 0.5Hz in the so-called delta state (complete rest) to about 100Hz in the gamma state (stress, full tension).

Thus, we can estimate the maximum computational power of the brain as 86 trillion synapses * 100Hz, that is 8.6 quadrillion operations per second (8.6*10^15), or, loosely speaking, 8.6 petaflops! Despite the relatively slow “clock” of the brain, this is a colossal number thanks to the parallelization of operations. From Wikipedia (https://en.wikipedia.org/wiki/Supercomputer), we learn that supercomputers did not break this limit until the early 2010s. The situation may change with the advent of quantum computers, which are often described as inherently parallel, much like the human brain. However, as of today, quantum computing technology is still in its infancy.
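The estimate is easy to reproduce. The short sketch below uses only the figures quoted in this post (86 billion neurons, about 1000 synapses per neuron, 0.5Hz to 100Hz) together with the same simplifying assumption of one “operation” per synapse per cycle. The constant and function names are made up for this illustration.

```python
# Figures quoted in the text; the "one operation per synapse per cycle"
# rule is the article's simplification, not a measured property of the brain.
NEURONS = 86_000_000_000       # neurons in an average human brain
SYNAPSES_PER_NEURON = 1_000    # rough number of connections per neuron
REST_FREQUENCY_HZ = 0.5        # delta state (complete rest)
PEAK_FREQUENCY_HZ = 100        # gamma state (stress, full tension)


def brain_ops_per_second(frequency_hz):
    """Total synapses multiplied by the number of cycles per second."""
    return NEURONS * SYNAPSES_PER_NEURON * frequency_hz


synapses = NEURONS * SYNAPSES_PER_NEURON
peak_ops = brain_ops_per_second(PEAK_FREQUENCY_HZ)
rest_ops = brain_ops_per_second(REST_FREQUENCY_HZ)

print(f"synapses:      {synapses:.1e}")                     # 8.6e+13
print(f"peak estimate: {peak_ops / 1e15:.1f} * 10^15 op/s")  # 8.6
print(f"rest estimate: {rest_ops / 1e12:.0f} * 10^12 op/s")  # 43
```

Note how wide the range is: at rest, the same arithmetic gives only about 43 trillion operations per second, 200 times less than the peak value.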

In conclusion, at the moment, AI has not yet overtaken the human brain, but it probably will someday. However, we are only talking about raw processing speed here, leaving aside the whole issue of creativity, “coming up with” ideas, emotions, etc.

AI and mobile devices

Artificial intelligence applications require substantial computational power, especially at the so-called learning (training) stage, which makes integrating them with AR and VR solutions a significant challenge. Unfortunately, AR and VR devices mostly have very limited resources, as they are effectively ARM processor-based mobile platforms comparable in performance to smartphones. Most artificial intelligence models are so computationally (mathematically) complex that they cannot practically be trained directly on mobile devices. OK, you can, but it will take an unacceptably long time. So in most cases, we train models on powerful PCs (clusters) with GPU accelerators, mainly Nvidia CUDA. This knowledge is then “exported” into a simplified model that is “implanted” into AR and VR software or mobile hardware.
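What “simplified” means depends on the toolchain, and this post does not say which technique is used. One common approach, assumed here purely for illustration, is quantization: the weights learned on the powerful machine are stored as small integers plus a single scale factor, which cuts memory use at the cost of a small rounding error. The function names and the weight values below are hypothetical.

```python
def quantize(weights, bits=8):
    """Symmetric quantization: floats become signed ints plus one float scale."""
    max_int = 2 ** (bits - 1) - 1            # 127 for 8 bits
    largest = max(abs(w) for w in weights)
    scale = largest / max_int if largest else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate float weights on the target device."""
    return [q * scale for q in quantized]


# Hypothetical weights produced by training on a PC or cluster.
trained_weights = [0.82, -1.27, 0.05, 0.4]

quantized, scale = quantize(trained_weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(trained_weights, restored))

print("8-bit weights:", quantized)           # [82, -127, 5, 40]
print("largest rounding error:", max_error)  # at most half of one scale step
```

Each weight now fits in one byte instead of four or eight, which is the kind of saving that matters on a headset with smartphone-class hardware.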

In our next blog post, you’ll learn how we integrated AI into VR and AR, how we dealt with the limited performance of mobile devices, and what we use AI for in AR and VR.